Good code by bad robots

For AI-assisted coding, pasting code into a chat interface simply isn’t going to cut it anymore. I’ve been using Cursor - an AI-first IDE - in a professional capacity for close to a year now, and Claude Code for personal use for a few months. If wielded incorrectly, these tools will allow you to write plausible looking but unmaintainable code at breakneck speeds, and your coworkers will curse you for the millions of lines in your PRs that feel less like coherent code and more like an attempt at industrial sabotage.

Not using them isn’t an option either - Even if you want to eschew generating code and keep that hand-made feel for your side projects at home, at work you will eventually want to write good code faster, and generally to keep an open mind about the whole “learning to code with LLMs” shtick in case the robots really take your job in the future, which has a worrying non-zero probability as of 2026. Let’s get started.

For a long time, the job of our tools has been to produce deterministic output. But suddenly LLMs come along and present us with an entirely new paradigm: There is this black box you can put any text in and get some more or less reasonable paragraphs out of, and the process that decides what word follows another is entirely probabilistic.

Just like any other tool, the output you get depends on your input. Put enough failed previous attempts in your prompt, and the LLM will start to doom spiral . If you tell the LLM to format its output in the style of Terry Pratchett or as Base64 , it will. And oh boy, that means that there are infinitely many possible outputs now! Your monkey on a typewriter hitting the keys at 3,000 WPM is capable of producing Hamlet - but in the worst case, also new and exciting versions of the worst code you’ve ever read.

Unfortunately, you are not really in a business where it is beneficial to produce lots of manure, really fast. Instead, you want to produce good code, roughly defined by the following metrics:

- It works, and does what it’s supposed to do.

- It solves the correct problem with the minimum amount of code required.

- It’s future proof, and meets your project’s standards in documentation, tests, security, usability and all the other little things your boss generally expects of your work without it being explicitly mentioned in your job description.

As of March 2026, agentic coding tools are super good at the first item , just fine at the second, and absolutely atrocious at the third unless you aggressively micro-manage the LLMs into doing so. Fortunately, there are three major ways to improve the quality of our output and the speed at which we get it.

Improving the function

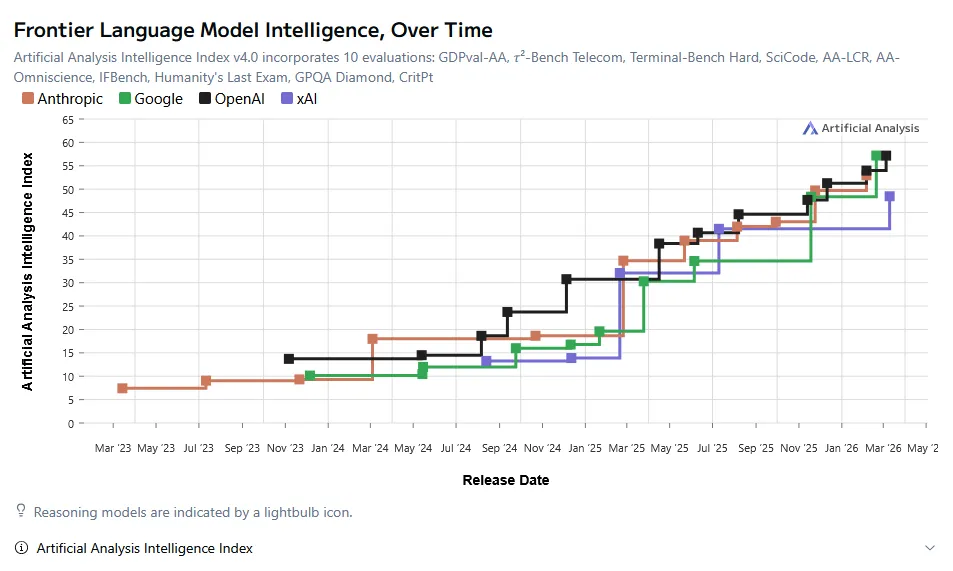

Seems like a lot of people are making sure AI moves fast nowadays. The frontier labs are releasing new models every two to four weeks now, sometimes on the same day, just to steal the spotlight. I can barely get through a system card before some shmuck on the internet already has an opinion on the newest Opus. What’s worse, comparisons between SOTA models of different providers are starting to sound very opinionated - They are all so similarly good at solving your coding problems now that whether you trust OpenAI or Anthropic with your money can largely come down to what personality you want your coding assistant to have.

But still, you can’t exactly not keep up with what the frontier labs are doing. I routinely read people’s opinions on AI on the internet where it feels like they decided their stance on LLMs somewhere around the release of GPT3 and never really updated on it. Some people are laughing about how stupid AI is based on the unhelpful answers they get from an old Gemini Flash model on Google Search. If I simply told you in this essay what the best model was and how you could maximize your performance with it, my writing would be outdated by Wednesday next week.

The problem is made worse by the high influx of open source models you can self host if you happen to have two spare GPUs fit for a data center, and the staggering amount of AI tools - Cursor, Claude, Codex, Gemini CLI, and 78 others I didn’t bother to list. There’s just too much stuff out there you could try out, and by Sturgeon’s Law, 90% of it is crap. Fortunately, there are only two rough guidelines you have to follow for a successful experience:

-

Get an agentic coding tool. It doesn’t really matter which. If you’re already using one, great. If you don’t, choose among the most popular ones - in March 2026, that’s Claude Code (by Anthropic) , Codex (by OpenAI) , or Cursor (by Cursor) . If you are using software by someone whose marketing share doesn’t depend on how good their AI-assisted coding experience is, proceed with caution. If your current approach to integrating LLMs into your workflow is asking ChatGPT questions about code you pasted into the chat window, stop. Please don’t.

-

Select the best available model you have access to. If you’re using Claude Code, that’s Opus. For Codex, the latest GPT model. For Cursor or any other tool that lets you select from multiple providers, any of the above. In 2026, almost any LLM can write functional code, but the ability to reliably architecture software is still missing in older/smaller models. Don’t start with self-hosting. Even though it is the most expensive option, you will want to use the best possible tool for the job until you’re close to your usage limit, at which point you can try to get a feel whether cheaper models can complete your work at the same level of quality.

Over time, you will learn what models work best for your use case, what amount of sycophancy you can put up with, and how autonomously you want the model to work.

Improving the input

Now, you want your SOTA model to produce good output, correct? Better give it a comfortable life. Better let it roam free-range. Stock up on the good caviar.

What model you choose is one thing, but what kind of harness you put around it - i.e. the instructions & tools you give it - is just as important. Every token you give the LLM matters. Every single one. Rephrasing prompts that convey the same intent but express it in a slightly different way may drop the model’s performance up to 61.8% . Giving the AI irrelevant context significantly degrades the output . If you tell a modern LLM to emulate an expert persona, it gets better at reasoning but worse at coding .

This also means that in order to produce good code with LLMs, you have to learn to communicate well. You should give it as much information as required to complete its current task and not a single irrelevant line more. If you want a program to print text in a certain color, you need to tell the LLM which one because, just like any other individual, it cannot look into your head and deduce that you want it in blue.

When you start out with agentic coding, you’ll have to figure out a balance of how detailed your instructions should be. Jon Skeet is the all-time highest reputation user on Stack Overflow , a legendary C# programmer and has helped countless coders make sense of their bugs. Every time I’m unsure of what I should put into a prompt, I ask myself: If I emailed this to Jon Skeet, would he be able to provide a solution?

-

Jon needs a clear picture of what I want. Jon probably wouldn’t be very happy if I shot him an email telling him to

plese fix the authentication. Instead, I gather relevant context first and include it in the email:parse_pass in src/auth.py is throwing an unexpected error when I do this: [...]. Here's how you can reproduce the issue: [...] Here's how you can test your changes: [...] -

Jon doesn’t have infinite time. The man works at Google, after all. He isn’t going to check every nook and cranny of my codebase, just the parts that seem relevant. If my email includes documentation for parts of the code he’s not supposed to touch, he will spend significant time sifting through the garbage instead of implementing a sensible solution.

-

Jon needs to know what metrics to hit. When you focus writing code for speed, size, or readability, you often have to trade off one for another. Jon needs to know what values I care about, and which I don’t. If I want him to make sure his changes work with my test suite, I have to tell him to run

npm run testto validate his changes. If I require the UI to adhere to some accessibility standard, I need to mention that. -

Jon can only work with the information I give him access to. When part of the solution requires finding the authentication tokens in an obscure Slack thread created three years ago, Mr. Skeet won’t be able to help without receiving an invite to that Slack organization and a small note telling him where to look.

By asking yourself the Jon Skeet question, you can also gauge what kind of problems you can readily throw at LLMs - Mainly those that are self contained, with an easily verifiable solution, and where all the information the model requires to provide a satisfying solution is freely available in text form. As you’re doing that, you will eventually come to realize a few things:

-

All improvements to the codebase are good, both for humans and for agents. It’s a bad look when you’re starting a new job and realize the README.md is 3 years out of date, the CI pipeline takes 32 minutes to complete and fails about half the time, and the build setup worryingly requires you to install an older version of Prolog. No one wants to work in a codebase like that, and your AI will chug through millions of tokens finding the one setup script that works. Fix the kind of things that make changing a single line of code a pain. Keep your build times short and your bug tickets detailed.

-

You’ll need to write a lot of documentation. No, even more than that. If you’ve ever inherited an older codebase from someone else unreachable by phone, email, or carrier pigeon, you know the pain of realizing just how much implicit knowledge can be stored in a single programmer’s brain. If you aren’t writing your stuff down (What coding standard are we following? Why is this nested for loop here?) both your coworkers and the AI will struggle. If you find you’re repeating instructions when you talk to your agent, it’s time to create some CLAUDE.md / AGENTS.md files, or an Agent Skill ( Codex , Claude Code ).

Improving the operator

If you’ve been working in this industry for a while you know that coding is the most well known part of your job description, but by far not the one you spend the most time in. A lot of the time, you’ll spend your day balancing your codebase’s wants and needs with other people. A big part of your job is saying No, let’s try something else. to your boss who wants to be able to disable 2FA when she doesn’t have her phone with her, the customer who wants a button that exports your data to functional PHP code, that one coworker that really wants to rewrite your backend in Rust.

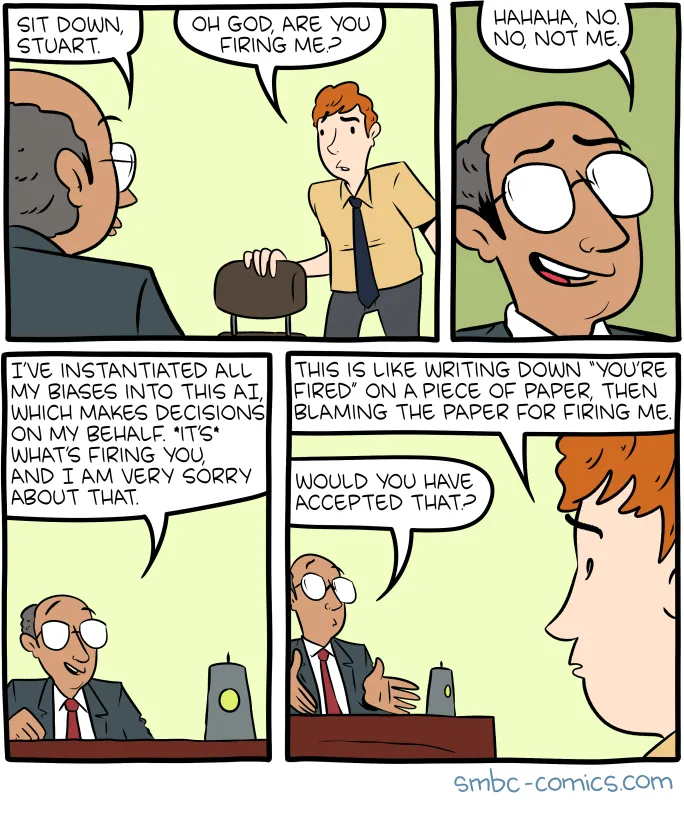

AI won’t do that. It will happily agree to any request you give it and throw its code at you five minutes later because it’s been trained to be maximally useful at fulfilling requests, not to produce maximally good code. There’s someone here who has to be a good judge of what input to give and what output to take and that person has to be you. The skills that made you a good developer before - knowing what to build, and knowing why you should build it - are the same that make you good at steering an agent. The tools may have changed, but the job sure didn’t.

-

You need competent people overseeing the work. Agents without a human in the loop are not yet good enough to do your job for you. Yes, Cursor was able to create an entire functional browser from scratch - if your definition of “functional” is the CI failing 88% of the time . Claude threw $20,000 and 16 Opus instances at the wall to create a compiler that doesn’t generalize past its test suite . You need to be good at software development in order to make the AI produce good software.

-

Delegate more tasks. AI works quietly in the background, chugging away at work until it’s time to ping back to home base. I wouldn’t trust it with making any executive decisions without human oversight, but there are areas of work that don’t require this: Code review, for example. Kick off a coding agent as soon as your backend goes down so its findings can help your humans get to a solution quicker. Configure Claude Code to periodically scan your codebase for vulnerabilities because let’s be real, you can’t be bothered to sift through all the alerts in

npm audityourself.

At any rate, writing the code just isn’t a big time sink anymore. With agents, you will be able to iterate much quicker through possible solutions. You can now experiment more easily with your code, or start debugging workflows in parallel. Code is, for better or for worse, free as in puppies now - Free to produce, still expensive to maintain.